创建简单数据集

import trees

myDat,labels = trees.createDataSet()

print("myDat:\n",myDat)

#计算香浓熵

trees.calcShannonEnt(myDat)

myDat:

[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

0.9709505944546686

划分数据集

分类算法除了需要测量信息熵,还需要划分数据集,度量花费数据集的熵。

append与extend拼接

a=[1,2,3]

b=[4,5,6]

c=[1,2,3]

d=[4,5,6]

a.append(b)

print("a:",a)

c.extend(d)

print("c:",c)

a: [1, 2, 3, [4, 5, 6]]

c: [1, 2, 3, 4, 5, 6]

测试划分数据集

trees.splitDataSet(myDat,0,1)

[[1, 'yes'], [1, 'yes'], [0, 'no']]

trees.splitDataSet(myDat,0,0)

[[1, 'no'], [1, 'no']]

选择最好的划分特征

接下来我们将遍历整个数据集,循环计算香浓熵和划分数据集,找到最好的特征划分方式。

-

数据必须是一种由列表元素组成的列表,而且所有的列表元素都要具有相同的数据长度

-

数据的最后一列或者每个实例的最后一个元素是当前实例的类别标签

#结果为0表示第0特征是最好的划分数据集的特征

trees.chooseBestFeatureToSplit(myDat)

0

递归构建决策树

目前我们已经学习了从数据集构建决策树算法所需要的子功能模块,其原理为:得到原始数据集,然后基于最好的属性值划分数据集,由于特征值可能多于两个,因此可能存在大于两个分支的数据集划分。第一次划分之后,数据将被向下传递到树分支的下一个节点,在这个节点上,我们可以再次划分数据,因此我们可以采用递归的原则处理数据集。

递归的结束条件:程序遍历完所有划分数据集的属性,或者每个分支下的所有实例都具有相同的分类。如果所有实例具有相同的分类,则得到一个叶子节点或者终止块。任何到达叶子节点的数据必然属于叶子节点的分类。

采用多数表决决定该叶子节点的分类

majorityCnt函数使用分类名称的列表,然后创建键值为classList中唯一值的数据字典,字典对象存储了classList中每个类标签出现的频率,最后利用operator操作键值排序字典,并返回出现次数最多的分类名称。

创建树

from importlib import reload

reload(trees)

myDat,labels = trees.createDataSet()

myTree = trees.createTree(myDat,labels)

print("myTree:\n",myTree)

myTree:

{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

构造注解树

决策树的主要优点是直观易于理解,如果不能将其直观的显示出来,就无法发挥其优势。但Python并没有提供绘制树的工具,因此我们必须自己绘制树形图:treePlotter.py

使用决策树进行分类

现在我们将在真实数据上使用决策树分类算法,验证它是否可以正确预测出患者应该使用的隐形眼镜类型。

依靠训练数据构造了决策树之后,我们可以将它用于实际数据的分类。在执行数据分类时,需要决策树以及用于构造树的标签向量。然后,程序比较测试数据与决策树上的数值,递归执行该过程直到进入叶子节点;最后将测试数据定义为叶子节点所属的类型。

import treePlotter

myDat,labels=trees.createDataSet()

print("labels:\n",labels)

myTree = treePlotter.retrieveTree(0)

print("myTree:\n",myTree)

labels:

['no surfacing', 'flippers']

myTree:

{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

print("[1,0]分类结果:")

trees.classify(myTree,labels,[1,0])

[1,0]分类结果:

'no'

print("[1,1]分类结果:")

trees.classify(myTree,labels,[1,1])

[1,1]分类结果:

'yes'

存储决策树

构造决策树是很耗时的任务,可以利用创建好的决策树解决分类问题,使用pickle模块存储决策树。

def storeTree(inputTree,filename):

import pickle

fw = open(filename,'w')

pickle.dump(inputTree,fw)

fw.close()

def grabTree(filename):

import pickle

fr = open(filename)

return pickle.load(fr)

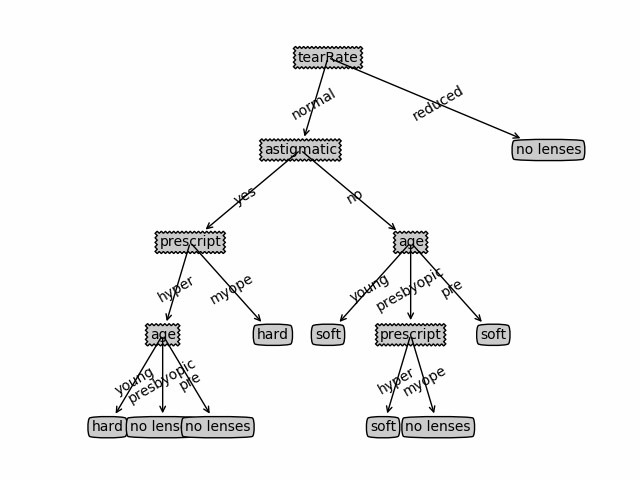

预测隐形眼镜类型

眼科医生是如何判断患者需要佩戴的镜片类型,一旦理解了决策树的工作原理,我们甚至也可以帮助人们判断需要佩戴的镜片类型。

import trees

import treePlotter

fr = open('lenses.txt')

lenses = [inst.strip().split('\t') for inst in fr.readlines()]

lensesLabels = ['age','prescript','astigmatic','tearRate']

lensesTree = trees.createTree(lenses,lensesLabels)

print("lensesTree:\n",lensesTree)

lensesTree:

{'tearRate': {'reduced': 'no lenses', 'normal': {'astigmatic': {'no': {'age': {'young': 'soft', 'presbyopic': {'prescript': {'hyper': 'soft', 'myope': 'no lenses'}}, 'pre': 'soft'}}, 'yes': {'prescript': {'hyper': {'age': {'young': 'hard', 'presbyopic': 'no lenses', 'pre': 'no lenses'}}, 'myope': 'hard'}}}}}}

执行treePlotter.createPlot(lensesTree),绘图:

图中决策树非常好地匹配了实验数据,然而这些匹配项可能太多了。我们将这种问题称为过度匹配。为了减少过度匹配,我们可以裁剪决策树,去掉一些不必要的叶子节点。如果叶子节点只能增加少许信息,则可以删除该节点,并将其并入其他叶子节点中。

后面还会学习决策树构造算法CART和C4.5,本文使用的是ID3算法。ID3算法无法直接处理数值型数据。

trees.py

下载trees.py

'''

Created on Oct 12, 2010

Decision Tree Source Code for Machine Learning in Action Ch. 3

@author: Peter Harrington

'''

from math import log

import operator

#创建简单数据集

def createDataSet():

dataSet = [[1, 1, 'yes'],

[1, 1, 'yes'],

[1, 0, 'no'],

[0, 1, 'no'],

[0, 1, 'no']]

labels = ['no surfacing','flippers']

#change to discrete values

return dataSet, labels

#计算给定数据集的香浓熵

def calcShannonEnt(dataSet):

numEntries = len(dataSet)

labelCounts = {}

for featVec in dataSet: #为所有可能分类创建字典

currentLabel = featVec[-1]

if currentLabel not in labelCounts.keys(): labelCounts[currentLabel] = 0

labelCounts[currentLabel] += 1

shannonEnt = 0.0

for key in labelCounts:

prob = float(labelCounts[key])/numEntries

shannonEnt -= prob * log(prob,2) #求对数

return shannonEnt

#划分数据集

#(待划分的数据集,划分数据集的特征,特征返回值)

def splitDataSet(dataSet, axis, value):

retDataSet = []

for featVec in dataSet:

if featVec[axis] == value:

reducedFeatVec = featVec[:axis] #抽取

reducedFeatVec.extend(featVec[axis+1:])

retDataSet.append(reducedFeatVec)

return retDataSet

#选择最好的数据集划分方式

#(1)创建唯一的分类标签列表

#(2)计算每种划分方式的信息熵

#(3)计算最好的信息增益

def chooseBestFeatureToSplit(dataSet):

numFeatures = len(dataSet[0]) - 1 #the last column is used for the labels

baseEntropy = calcShannonEnt(dataSet)

bestInfoGain = 0.0; bestFeature = -1

for i in range(numFeatures): #iterate over all the features

featList = [example[i] for example in dataSet]#create a list of all the examples of this feature

uniqueVals = set(featList) #get a set of unique values

newEntropy = 0.0

for value in uniqueVals:

subDataSet = splitDataSet(dataSet, i, value)

prob = len(subDataSet)/float(len(dataSet))

newEntropy += prob * calcShannonEnt(subDataSet)

infoGain = baseEntropy - newEntropy #calculate the info gain; ie reduction in entropy

if (infoGain > bestInfoGain): #compare this to the best gain so far

bestInfoGain = infoGain #if better than current best, set to best

bestFeature = i

return bestFeature #returns an integer

#多数表决决定该叶子节点的分类

def majorityCnt(classList):

classCount={}

for vote in classList:

if vote not in classCount.keys(): classCount[vote] = 0

classCount[vote] += 1

sortedClassCount = sorted(classCount.iteritems(), key=operator.itemgetter(1), reverse=True)

return sortedClassCount[0][0]

#创建树

#输入:(数据集,标签列表)

#标签列表包含了数据集中所有的特征的标签,

#算法本身并不需要这个变量,

#但是为了给出数据明确的含义,我们把它作为一个输入参数提供

def createTree(dataSet,labels):

classList = [example[-1] for example in dataSet]

if classList.count(classList[0]) == len(classList):

return classList[0]#类别完全相同则停止继续划分

if len(dataSet[0]) == 1: #遍历完所有特征时返回出现次数最多的

return majorityCnt(classList)

bestFeat = chooseBestFeatureToSplit(dataSet)

bestFeatLabel = labels[bestFeat]

myTree = {bestFeatLabel:{}}

del(labels[bestFeat])

featValues = [example[bestFeat] for example in dataSet]#得到列表包含的所有属性值

uniqueVals = set(featValues)

for value in uniqueVals:

subLabels = labels[:]#为了不改变原列表内容,使用新变量代替原列表

myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value),subLabels)

return myTree

#使用决策树的分类函数

#比较testVec变量中的值与树节点的值,

#如果到达叶子节点,则返回当前节点的分类标签

def classify(inputTree,featLabels,testVec):

#firstStr = inputTree.keys()[0]#py2版本

firstSides = list(inputTree.keys())

firstStr = firstSides[0]#py3版本

secondDict = inputTree[firstStr]

featIndex = featLabels.index(firstStr)#将标签字符串转换为索引

key = testVec[featIndex]

valueOfFeat = secondDict[key]

if isinstance(valueOfFeat, dict):

classLabel = classify(valueOfFeat, featLabels, testVec)

else: classLabel = valueOfFeat

return classLabel

#构造决策树是很耗时的任务,

#可以利用创建好的决策树解决分类问题

#使用pickle模块存储决策树

def storeTree(inputTree,filename):

import pickle

fw = open(filename,'w')

pickle.dump(inputTree,fw)

fw.close()

def grabTree(filename):

import pickle

fr = open(filename)

return pickle.load(fr)

treePlotter.py

'''

Created on Oct 14, 2010

@author: Peter Harrington

'''

##############################

#利用matplotlib绘制决策树

###############################

import matplotlib.pyplot as plt

decisionNode = dict(boxstyle="sawtooth", fc="0.8")

leafNode = dict(boxstyle="round4", fc="0.8")

arrow_args = dict(arrowstyle="<-")

def getNumLeafs(myTree):

numLeafs = 0

#firstStr = myTree.keys()[0]

firstSides = list(myTree.keys())

firstStr = firstSides[0]#py3版本

secondDict = myTree[firstStr]

for key in secondDict.keys():

if type(secondDict[key]).__name__=='dict':#test to see if the nodes are dictonaires, if not they are leaf nodes

numLeafs += getNumLeafs(secondDict[key])

else: numLeafs +=1

return numLeafs

def getTreeDepth(myTree):

maxDepth = 0

#firstStr = myTree.keys()[0]

firstSides = list(myTree.keys())

firstStr = firstSides[0]#py3版本

secondDict = myTree[firstStr]

for key in secondDict.keys():

if type(secondDict[key]).__name__=='dict':#test to see if the nodes are dictonaires, if not they are leaf nodes

thisDepth = 1 + getTreeDepth(secondDict[key])

else: thisDepth = 1

if thisDepth > maxDepth: maxDepth = thisDepth

return maxDepth

def plotNode(nodeTxt, centerPt, parentPt, nodeType):

createPlot.ax1.annotate(nodeTxt, xy=parentPt, xycoords='axes fraction',

xytext=centerPt, textcoords='axes fraction',

va="center", ha="center", bbox=nodeType, arrowprops=arrow_args )

def plotMidText(cntrPt, parentPt, txtString):

xMid = (parentPt[0]-cntrPt[0])/2.0 + cntrPt[0]

yMid = (parentPt[1]-cntrPt[1])/2.0 + cntrPt[1]

createPlot.ax1.text(xMid, yMid, txtString, va="center", ha="center", rotation=30)

def plotTree(myTree, parentPt, nodeTxt):#if the first key tells you what feat was split on

numLeafs = getNumLeafs(myTree) #this determines the x width of this tree

depth = getTreeDepth(myTree)

#firstStr = myTree.keys()[0] #the text label for this node should be this

firstSides = list(myTree.keys())

firstStr = firstSides[0]#py3版本

cntrPt = (plotTree.xOff + (1.0 + float(numLeafs))/2.0/plotTree.totalW, plotTree.yOff)

plotMidText(cntrPt, parentPt, nodeTxt)

plotNode(firstStr, cntrPt, parentPt, decisionNode)

secondDict = myTree[firstStr]

plotTree.yOff = plotTree.yOff - 1.0/plotTree.totalD

for key in secondDict.keys():

if type(secondDict[key]).__name__=='dict':#test to see if the nodes are dictonaires, if not they are leaf nodes

plotTree(secondDict[key],cntrPt,str(key)) #recursion

else: #it's a leaf node print the leaf node

plotTree.xOff = plotTree.xOff + 1.0/plotTree.totalW

plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)

plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))

plotTree.yOff = plotTree.yOff + 1.0/plotTree.totalD

#if you do get a dictonary you know it's a tree, and the first element will be another dict

def createPlot(inTree):

fig = plt.figure(1, facecolor='white')

fig.clf()

axprops = dict(xticks=[], yticks=[])

createPlot.ax1 = plt.subplot(111, frameon=False, **axprops) #no ticks

#createPlot.ax1 = plt.subplot(111, frameon=False) #ticks for demo puropses

plotTree.totalW = float(getNumLeafs(inTree))

plotTree.totalD = float(getTreeDepth(inTree))

plotTree.xOff = -0.5/plotTree.totalW; plotTree.yOff = 1.0;

plotTree(inTree, (0.5,1.0), '')

plt.show()

#def createPlot():

# fig = plt.figure(1, facecolor='white')

# fig.clf()

# createPlot.ax1 = plt.subplot(111, frameon=False) #ticks for demo puropses

# plotNode('a decision node', (0.5, 0.1), (0.1, 0.5), decisionNode)

# plotNode('a leaf node', (0.8, 0.1), (0.3, 0.8), leafNode)

# plt.show()

def retrieveTree(i):

listOfTrees =[{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}},

{'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}

]

return listOfTrees[i]

#createPlot(thisTree)

数据集下载

隐形眼镜数据集:lenses.txt